Designing HVAC systems for data centres

June 22, 2022

By Andrew Snook

Efficiency is very important.

For companies that want to maintain complete control of their infrastructure, data centres are often a key component. That said, they are also energy-intensive and can be costly to maintain, so designing an efficient HVAC system for them is extremely important.

There are several key factors to consider when designing an HVAC system for data centres.

“We see environmental impact, initial capital cost and operating cost being primary considerations when deciding on cooling methodologies,” says Dave Ritter, product manager for Johnson Controls. “Finding the right balance to meet space constraints, PUE (power usage effectiveness) and WUE (water usage effectiveness), while remaining scalable and cost-effective, has become more challenging in recent times.”

Michael Strouboulis, director of business development for data centres at Danfoss, says the most important items to consider are rack density, the surrounding environment, uptime requirements and the targets for PUE.

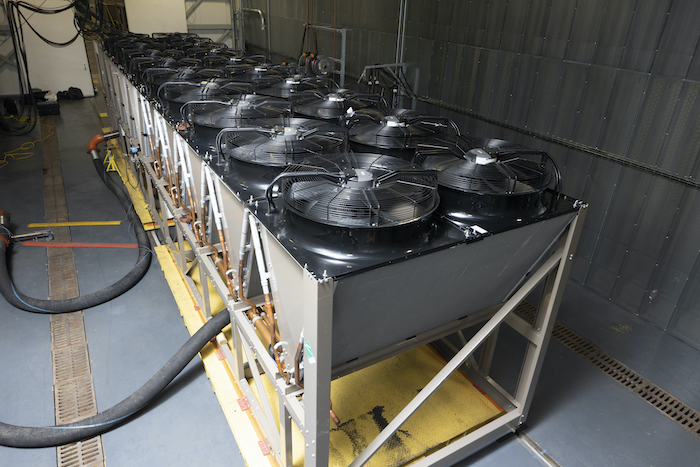

“Rack density and outside temperatures will determine whether an air or liquid cooling system should be used. To meet uptime requirements, it is essential that the sub-components within the cooling equipment are of high quality, high reliability, highly engineered and tested components – such as compressors, heat exchangers, motors, fans, flow and automatic controls. Technologies such as oil-free compressors can eliminate known cooling system failure modes, such as failures due to loss of lubrication of oiled compressors,” he says.

Since data centres are high energy users and we live in an era where businesses are expected (and sometimes legally obligated) to reduce carbon emissions and lower their energy costs by improving efficiency, there is a push toward sustainable data centres, Strouboulis says.

“Increasingly, consulting engineers are expected to design for improved data centre operational efficiency and reduced power consumption and to employ equipment that decreases the carbon emissions of the data halls,” he says, adding that PUE, WUE and energy reuse effectiveness (ERE) all should be considered in the design process. “There is an opportunity to improve ERE by capturing and reusing the heat generated by the data centre using energy transfer stations and district energy loops for commercial, residential and industrial heat consumers nearby.”
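The three metrics Strouboulis names are each a simple ratio against the energy consumed by the IT equipment. The sketch below follows the commonly cited Green Grid definitions; the function names and the annual figures are illustrative assumptions, not data from any facility mentioned in this article.

```python
# Minimal sketch of PUE, WUE and ERE as ratios against IT energy.
# All input figures below are hypothetical annual totals.

def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT energy."""
    return total_facility_kwh / it_kwh


def wue(site_water_litres: float, it_kwh: float) -> float:
    """Water usage effectiveness: site water use (L) / IT energy (kWh)."""
    return site_water_litres / it_kwh


def ere(total_facility_kwh: float, reused_kwh: float, it_kwh: float) -> float:
    """Energy reuse effectiveness: (total energy - reused energy) / IT energy."""
    return (total_facility_kwh - reused_kwh) / it_kwh


# Hypothetical facility: 10 GWh of IT load, 14 GWh total, 2 GWh of heat
# exported to a district energy loop, 18 million litres of water.
it_energy = 10_000_000.0
total_energy = 14_000_000.0
reused_energy = 2_000_000.0
water_used = 18_000_000.0

print("PUE:", round(pue(total_energy, it_energy), 2))
print("WUE (L/kWh):", round(wue(water_used, it_energy), 2))
print("ERE:", round(ere(total_energy, reused_energy, it_energy), 2))
```

With no heat reuse, ERE equals PUE; every kilowatt-hour exported to a nearby heat consumer pulls ERE below PUE, which is why the two are worth tracking side by side.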

Common mistakes

A common mistake when designing a data centre is failing to consider the system as a whole.

“The components in a data centre HVAC system must work together; therefore, the entire system must be considered in the design process,” Strouboulis says. “For example, specifying a balanced chilled water hydronic loop in the data centre is just as important as the chiller or cooling tower or variable speed pump specifications. Pressure-independent control valves and smart actuator specifications help eliminate hotspots, a very common issue, by delivering chilled water where it is needed most, in the racks with the highest loads, and help reduce commissioning time significantly. And if they are equipped with sensors that collect data about the hydronic loop, they can dynamically optimize its operation in real time.”

Another pitfall is going with a design that does not permit flexibility in allowable rack density.

“As IT equipment is replaced and upgraded over time, rack density will change. Data centre operators will be at a major disadvantage if the racks are not re-configurable for different rack density, potentially resulting in inadequate cooling,” Strouboulis says. “A future-proof design will use components that can automatically adjust operational characteristics based on the local rack needs. And pay attention to cable management. An incorrect configuration could cause unintended blockage of air flow in the rooms.”

Ritter adds that some components in HVAC systems have a 15- to 30-year life, which far exceeds the useful life of the IT equipment in the data hall, so it’s important to ensure HVAC investments are future-proofed.

“For example, as thermal guidelines and rack density continue to expand, consider if designs are adaptable to take advantage of a changing landscape,” he says.

Another common mistake is taking any unoccupied room in the office (a mailroom, empty office or janitorial closet), converting it into a data closet and relying on the existing HVAC infrastructure for climate control.

“This approach may be the right choice in terms of square footage needed, but when it comes to proper climate conditions for sensitive IT equipment, it could not be more wrong. At best, these spaces are cooled using only the building’s AC system. At worst? An open window,” says Herb Villa, senior applications engineer at Rittal. “A building’s existing air conditioning system – or combined heat and air conditioning system – is designed to create comfortable environments for employees; the reason they are sometimes referred to as ‘comfort systems.’ When IT racks need to be placed somewhere on-site, it’s thought that ‘any old room’ will do because AC ductwork usually terminates in these spaces. However, the reality is that even if you were to add ducts to supplement the building’s AC, relying on a system designed for humans is not a good solution for IT equipment.”

Villa says there are five enclosure climate control challenges to consider that create hidden risks when relying on a building’s HVAC system:

- Contaminants: A repurposed space can be exposed to airborne dust, gases and moisture that seep into the room and compromise the quality of the air and the performance of the equipment; these may not be adequately removed from the room using only the existing AC.

- Reliability/redundancy: Even a short interruption in power supply to computer equipment can lead to loss of data and the same is true for interruptions in cooling. Most buildings do not have redundant cooling in place and often an AC system breakdown can last hours, a costly risk for IT equipment.

- Comfort systems cycle on and off: The temperature in the closet will decrease when the cooling system is on and increase when it is off, resulting in temperature swings throughout the day that can stress the equipment more than a consistent higher temperature. Moreover, the issue is not only daily temperature swings, but also more sustained periods that put the equipment outside its recommended operating range. Comfort cooling systems are often programmed for higher temperature set points on weeknights and weekends to conserve energy. The average temperature within a server closet will generally increase by the amount the temperature set point is increased.

- Combined heating and cooling HVAC systems deliver heat in winter: The same ductwork that supplies cool air to the IT closet in warmer months will deliver heated air in colder months. This almost guarantees overheating of the equipment and increases the risk of equipment failure.

- Inability to scale: Every kilowatt of power used by the IT equipment creates a kilowatt of heat that must be removed. If you were to add an additional rack and more equipment, the existing HVAC system would be even less capable of maintaining the ideal temperature.
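The last point, that every kilowatt of IT power becomes a kilowatt of heat, can be turned into a quick sizing check. The sketch below is a rough estimate only: it ignores lighting, people and heat gain through walls, and the rack loads are hypothetical. The conversion factors (1 kW ≈ 3,412 BTU/h; 1 ton of refrigeration = 12,000 BTU/h ≈ 3.517 kW) are standard.

```python
from typing import Dict, Sequence

KW_TO_BTU_PER_HR = 3412.14   # 1 kW of heat expressed in BTU/h
KW_PER_TON = 3.517           # 1 ton of refrigeration in kW


def cooling_load(rack_kw: Sequence[float]) -> Dict[str, float]:
    """Estimate the heat to be removed, assuming all IT power becomes heat."""
    total_kw = sum(rack_kw)
    return {
        "kw": total_kw,
        "btu_per_hr": total_kw * KW_TO_BTU_PER_HR,
        "tons": total_kw / KW_PER_TON,
    }


# Hypothetical closet: two 5 kW racks today, one 8 kW rack added later.
today = cooling_load([5.0, 5.0])
after_expansion = cooling_load([5.0, 5.0, 8.0])

print("Today: %.1f kW, %.1f tons" % (today["kw"], today["tons"]))
print("After expansion: %.1f kW, %.1f tons"
      % (after_expansion["kw"], after_expansion["tons"]))
```

Comparing the result with the share of the building's comfort system that actually reaches the room shows how quickly a single added rack can outrun it.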

Design tips

Strouboulis offers the following recommendations for designing the system to be as energy efficient as possible:

- Keep current and future energy density in mind. This will aid in selecting the right equipment initially and help avoid unnecessary and often sub-optimized expansion later.

- Consider free cooling in order to benefit from higher operating temperatures allowed by IT equipment manufacturers.

- Centralize the cooling instead of using multiple modular units to increase overall energy efficiency. However, this must be analyzed against the need for varying cooling needs over different racks.

- Use adiabatic cooling to provide cooling without compression. This needs to be done with due consideration of water usage (for example, using oil-free high-pressure pumps with very-fine-misting nozzles instead of water spray grids).
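To illustrate the free-cooling recommendation, the sketch below counts the hours in which outdoor air is cool enough to meet a given supply set point. It is a deliberate simplification: it uses dry-bulb temperature only, the 3 °C approach margin is an assumed value, and the temperature readings are made up for the example.

```python
from typing import Iterable


def free_cooling_hours(outdoor_temps_c: Iterable[float],
                       supply_setpoint_c: float,
                       approach_c: float = 3.0) -> int:
    """Count hourly readings cool enough for free cooling.

    An hour qualifies when the outdoor temperature is at or below the
    supply set point minus an assumed heat-exchanger approach margin.
    """
    limit = supply_setpoint_c - approach_c
    return sum(1 for temp in outdoor_temps_c if temp <= limit)


# Hypothetical hourly outdoor readings in degrees Celsius.
readings = [5.0, 12.0, 18.0, 22.0, 27.0]

# Raising the allowed supply temperature increases the qualifying hours.
print(free_cooling_hours(readings, supply_setpoint_c=20.0))
print(free_cooling_hours(readings, supply_setpoint_c=27.0))
```

Run against a full year of local weather data, the same comparison gives a first impression of how much a higher allowable operating temperature extends the free-cooling season.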

Sustainable solutions

Since data centres need to operate 24 hours a day, seven days a week, 365 days a year, they are extremely energy intensive. Strouboulis says that to create more sustainable data centres, engineers must consider PUE, WUE and carbon usage effectiveness (CUE).
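CUE relates a facility's carbon emissions to its IT energy. As a minimal sketch, assuming all energy comes from grid electricity with a single emission factor, CUE reduces to that factor multiplied by PUE. The function names and the figures below are illustrative assumptions.

```python
def cue(total_co2e_kg: float, it_kwh: float) -> float:
    """Carbon usage effectiveness: total CO2e emissions (kg) / IT energy (kWh)."""
    return total_co2e_kg / it_kwh


def cue_from_pue(pue: float, grid_factor_kg_per_kwh: float) -> float:
    """Shortcut valid only when all energy is grid electricity
    with one emission factor: CUE = emission factor x PUE."""
    return grid_factor_kg_per_kwh * pue


# Hypothetical facility: PUE of 1.4 on a grid emitting 0.4 kg CO2e per kWh.
it_energy_kwh = 10_000_000.0
total_energy_kwh = 14_000_000.0
grid_factor = 0.4

emissions_kg = total_energy_kwh * grid_factor
print("CUE:", round(cue(emissions_kg, it_energy_kwh), 2))
print("CUE via PUE:", round(cue_from_pue(1.4, grid_factor), 2))
```

The shortcut makes the two available levers visible: a lower PUE or a cleaner energy supply each reduce CUE.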

“With the trend toward low-GWP and low-density refrigerants, Danfoss and other manufacturers have developed compatible compressors, heat exchangers, sensors and flow controls,” he says. “Regions with favourable climate conditions are ideal for the adoption of free cooling techniques. Danfoss and others have gasketed plate heat exchangers for liquid-to-liquid free cooling as well as components for evaporative and adiabatic coolers for data centres, using specially designed oil-free high-pressure pumps and nozzles for very fine water misting that saves water.”

Recovering heat from data centres and providing this heat for industrial or district energy needs is an important step in sustainability, Strouboulis adds.

“Avenues for simple heat recovery with liquid-to-liquid heat exchangers or heat pumps that provide useful heat to district heating or water heating applications are worth considering when designing data centres.”